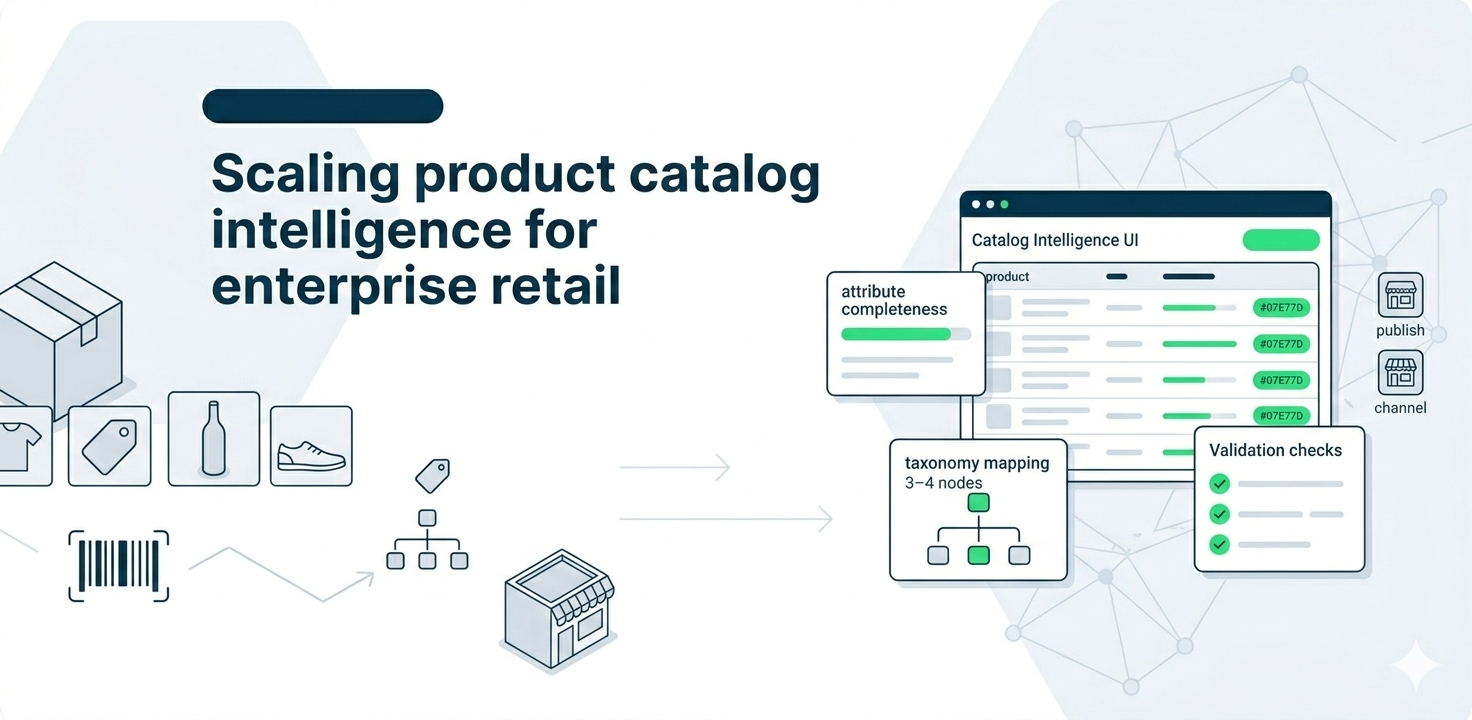

Enterprise retailers and marketplaces don’t stall because they lack products—they stall because they can’t catalog products fast enough with clean, consistent, attribute-rich data. We helped an enterprise retail organization revamp its data architecture to reliably manage and serve a very large-scale product-attribute dataset (approx. 750M attribute records)—so e-retailers and marketplaces could close catalog gaps and expand product offerings faster.

At a glance:

- Industry: Enterprise Retail / Marketplaces

- Core problem: Catalog gaps and slow product onboarding due to fragmented, inconsistent attribute data

- What we delivered: A scalable product intelligence data architecture + pipelines to manage and serve high-volume attribute data

- Primary impact: Faster catalog expansion, improved data consistency for listings, and reduced friction in product onboarding workflows

- Core stack: AWS engineering services, MongoDB, PostgreSQL, BigQuery, PHP

The challenge: product growth was constrained by catalog data quality and throughput

The client needed to deliver comprehensive product information—deep attributes, consistent taxonomy, and accurate mappings—at a scale that traditional manual cataloging or ad-hoc scripts couldn’t support. Marketplace teams were experiencing catalog gaps that slowed product expansion and created inconsistent product experiences across listings.

What we set out to solve:

- Create a scalable architecture for managing massive product attribute volume

- Reduce inconsistency in taxonomy, attributes, and mappings across categories

- Improve catalog “publish readiness” with validation and standardization

- Enable faster product onboarding for e-retailers and marketplaces

- Build a repeatable system that can expand across categories without rework

“Catalog scale isn’t a merchandising problem. It’s a data architecture problem.”

What “good” looked like (success criteria)

We aligned success criteria around outcomes that catalog, data, and platform teams could validate operationally: speed of onboarding, consistency of attributes, and stability as volumes grow.

Success criteria:

- Scale: Support hundreds of millions of attribute records reliably

- Consistency: Standardized attributes and taxonomy across categories

- Quality: Validation checks that prevent bad catalog data from being published

- Throughput: Faster onboarding and expansion of product offerings

- Maintainability: Clean, extensible pipelines (not fragile one-off scripts)

Solution overview

We implemented a scalable product intelligence foundation that combined resilient storage, structured relational modeling where needed, and analytics-friendly serving patterns. The architecture supported ingestion, normalization, enrichment, validation, and downstream consumption—so teams could expand catalog coverage without sacrificing data integrity.

1. High-volume product attribute ingestion and storage

We designed the ingestion layer to reliably handle very large attribute datasets—supporting continuous growth in categories and attribute depth. This reduced operational fragility and ensured new data could be incorporated without breaking downstream processes.

2. Normalization + catalog standardization (taxonomy, mappings, and consistency)

The core of catalog scale is consistency. We implemented standardized structures for attributes and taxonomy so the same product category doesn’t behave like “200 different systems.” This made catalog outputs more predictable across marketplaces and improved listing quality.

3. Quality gates and publish readiness checks

We introduced validation logic to catch issues early—missing critical attributes, invalid values, inconsistent mappings, or category-rule violations—so only publish-ready product information flowed forward. This reduced downstream rework and improved trust in the data.

4. Serving layer for downstream catalog expansion workflows

We structured outputs so downstream systems and teams (catalog ops, marketplace integrations, onboarding workflows) could consume consistent product information quickly—enabling faster website assortment expansion and reducing manual back-and-forth.

Implementation playbook

We executed this as a controlled modernization: first stabilize the data model and rules, then scale ingestion and processing, then operationalize quality gates and serving patterns—so the catalog system improved without disrupting business operations.

- Phase 1: Discovery + catalog rules mapping — taxonomy, attribute standards, and “must-have” rules by category

- Phase 2: Data foundation build — scalable ingestion + storage + curated structures

- Phase 3: Quality + validation — publish readiness gates and exception reporting

- Phase 4: Serving + enablement — downstream access patterns and operational rollout

Impact

- Closed catalog gaps by delivering more complete, attribute-rich product information

- Accelerated catalog expansion as marketplaces could onboard products faster

- Improved data consistency across categories and listings (fewer attribute mismatches)

- Reduced rework through validation gates and predictable publish-ready outputs

- Scalable foundation for continuous category and assortment growth

Technology stack

- AWS engineering services — infrastructure and data engineering foundation

- MongoDB — flexible storage for semi-structured product data patterns

- PostgreSQL — structured relational modeling where consistency and joins matter

- BigQuery — analytics layer for large-scale querying and analysis

- PHP — application/integration components supporting consumption workflows and performance

- Power BI — control tower dashboards and drilldowns

Need to scale your catalogue without compromising quality?

We design a product intelligence foundation to automate onboarding and maintain quality as your marketplace scales.

Frequently Asked Questions

Why do catalog gaps happen even when retailers have product data?

Because the data is often incomplete, inconsistent across sources, or not standardized to a taxonomy that marketplaces can reliably publish. The problem is usually data structure + validation + workflow—not “lack of records.”

What’s the biggest technical challenge in product attribute platforms at scale?

Managing consistency and quality as volume grows. Without strong normalization rules and publish readiness checks, attribute sprawl creates unreliable listings and constant manual cleanup.

How do you prevent the architecture from becoming fragile over time?

By designing repeatable pipelines, enforcing versioned rules/taxonomy standards, and building exception-first monitoring—so changes don’t silently break downstream listings.